I gave a talk at Glug London last week, where I discussed something that’s been on my mind at least since 2007, when I last talked about it briefly at Interesting.

It is rearing its head in our work, and in work and writings by others – so I thought I would give it another airing.

The talk at Glug London bounced through some of our work, and our collective obsession with Mary Poppins, so I’ll cut to the bit about the Robot-Readable World, and rather than try and reproduce the talk I’ll embed the images I showed that evening, but embellish and expand on what I was trying to point at.

Robot-Readable World is a pot to put things in, something that I first started putting things in back in 2007 or so.

At Interesting back then, I drew a parallel between the Apple Newton’s sophisticated, complicated hand-writing recognition and the Palm Pilot’s approach of getting humans to learn a new way to write, i.e. Graffiti.

The connection I was trying to make was that there is a deliberate design approach that makes use of the plasticity and adaptability of humans to meet computers (more than) half way.

Connecting this to computer vision and robotics I said something like:

“What if, instead of designing computers and robots that relate to what we can see, we meet them half-way – covering our environment with markers, codes and RFIDs, making a robot-readable world”

After that I ran a little session at FooCamp in 2009 called “Robot readable world (AR shouldn’t just be for humans)” which was a bit ill-defined and caught up in the early hype of augmented reality…

But the phrase and the thought have been nagging at me ever since.

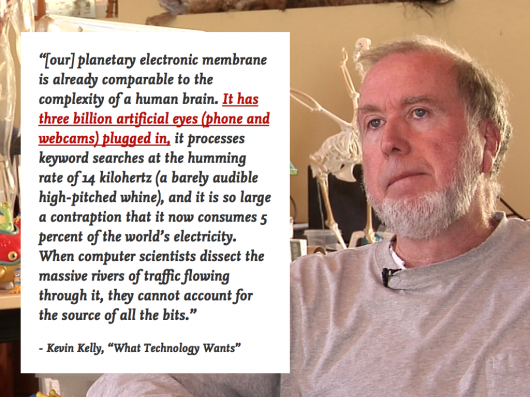

I read Kevin Kelly’s “What technology wants” recently, and this quote popped out at me:

Three billion artificial eyes!

In zoologist Andrew Parker’s 2003 book “In the blink of an eye” he outlines ‘The Light Switch Theory’.

“The Cambrian explosion was triggered by the sudden evolution of vision” in simple organisms… active predation became possible with the advent of vision, and prey species found themselves under extreme pressure to adapt in ways that would make them less likely to be spotted. New habitats opened as organisms were able to see their environment for the first time, and an enormous amount of specialization occurred as species differentiated.”

In this light (no pun intended) the “Robot-Readable World” imagines the evolutionary pressure of those three billion (and growing) linked, artificial eyes on our environment.

It imagines a new aesthetic born out of that pressure.

As I wrote in “Sensor-Vernacular”

[it is an aesthetic…] Of computer-vision, of 3d-printing; of optimised, algorithmic sensor sweeps and compression artefacts. Of LIDAR and laser-speckle. Of the gaze of another nature on ours. There’s something in the kinect-hacked photography of NYC’s subways that we’ve linked to here before, that smacks of the viewpoint of that other next nature, the robot-readable world. The fascination we have with how bees see flowers, revealing the animal link between senses and motives. That our environment is shared with things that see with motives we have intentionally or unintentionally programmed them with.

The things we are about to share our environment with are born themselves out of a domestication of inexpensive computation, the ‘Fractional AI’ and ‘Big Maths for trivial things’ that Matt Webb has spoken about this year (I’d recommend starting at his Do Lecture).

And, as he’s also said before – it is a plausible, purchasable near-future that can be read in the catalogues of discount retailers as well as the short stories of speculative fiction writers.

We’re in a present, after all, where a £100 point-and-shoot camera has the approximate empathic capabilities of an infant, recognising and modifying its behaviour based on facial recognition.

And where the number one toy last Christmas is a computer-vision eye that can sense depth, movement, detect skeletons and is a direct descendant of techniques and technologies used for surveillance and monitoring.

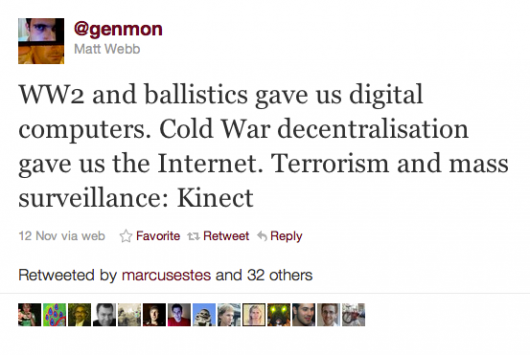

As Matt Webb pointed out on twitter last year:

Ten years of investment in security measures funded and inspired by the ‘War On Terror’ have led us to this point, but what has been left behind by that tide is domestic, cheap and hackable.

Kinect hacking has become officially endorsed and, to my mind, the hacks are more fun than the games that have been published for it.

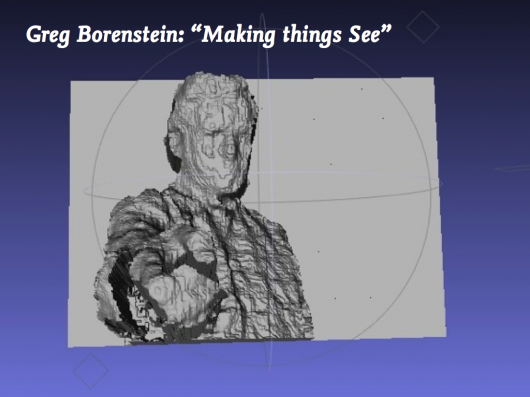

Greg Borenstein, who scanned me with a Kinect at FooCamp, is at the moment writing a book for O’Reilly called ‘Making Things See’.

It is a companion in some ways to “Making Things Talk”, Tom Igoe’s handbook to injecting behaviour into everyday things with Arduino and other hackable, programmable hardware.

“Making Things See” could be the beginning of a ‘light-switch’ moment for everyday things with behaviour hacked-into them. For things with fractional AI, fractional agency – to be given a fractional sense of their environment.

Again, I wrote a little bit about that in “Sensor-Vernacular”, and the above image by James George & Alexander Porter still pins that feeling for me.

The way the world is fractured from a different viewpoint, a different set of senses from a new set of sensors.

Perhaps it’s the suspicious look from the fella with the moustache that nails it.

And it’s a thought that was with me while I wrote that post that I want to pick at.

The fascination we have with how bees see flowers, revealing the animal link between senses and motives. That our environment is shared with things that see with motives we have intentionally or unintentionally programmed them with.

Which leads me to Richard Dawkins.

Richard Dawkins talks about how we have evolved to live ‘in the middle’ (http://www.ted.com/talks/richard_dawkins_on_our_queer_universe.html) and our sensorium defines our relationship to this ‘Middle World’

“What we see of the real world is not the unvarnished world but a model of the world, regulated and adjusted by sense data, but constructed so it’s useful for dealing with the real world.

The nature of the model depends on the kind of animal we are. A flying animal needs a different kind of model from a walking, climbing or swimming animal. A monkey’s brain must have software capable of simulating a three-dimensional world of branches and trunks. A mole’s software for constructing models of its world will be customized for underground use. A water strider’s brain doesn’t need 3D software at all, since it lives on the surface of the pond in an Edwin Abbott flatland.”

“Middle World – the range of sizes and speeds which we have evolved to feel intuitively comfortable with – is a bit like the narrow range of the electromagnetic spectrum that we see as light of various colours. We’re blind to all frequencies outside that, unless we use instruments to help us. Middle World is the narrow range of reality which we judge to be normal, as opposed to the queerness of the very small, the very large and the very fast.”

At the Glug London talk, I showed a short clip of Dawkins’ 1991 RI Christmas Lecture “The Ultraviolet Garden”. The bit we’re interested in starts about 8 minutes in – but the whole thing is great.

In that bit he talks about how flowers have evolved to become attractive to bees, hummingbirds and humans – all occupying separate sensory worlds…

Which leads me back to…

What’s evolving to become ‘attractive’ and meaningful to both robot and human eyes?

Also – as Dawkins points out

The nature of the model depends on the kind of animal we are.

That is, to say ‘robot eyes’ is like saying ‘animal eyes’ – the breadth of speciation in the fourth kingdom will lead to a huge breadth of sensory worlds to design within.

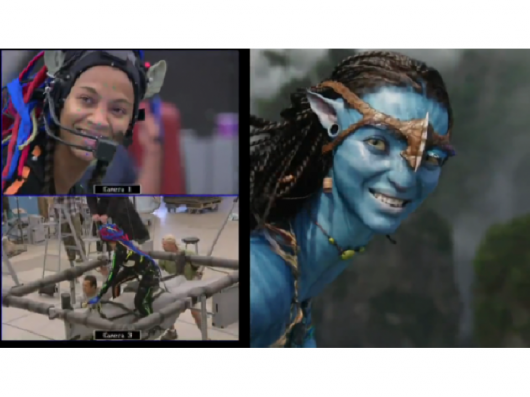

One might look for signs in the world of motion-capture special effects, where Zoe Saldana’s chromakey acne and high-viz dreadlocks that transform her into an alien giantess in Avatar could morph into fashion statements alongside Beyoncé’s chromasocks…

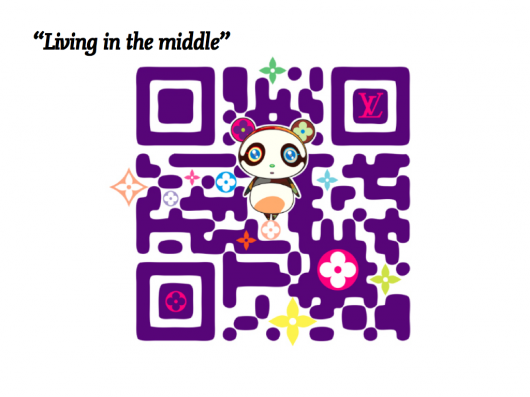

Or Takashi Murakami’s illustrative QR codes for Louis Vuitton.

That such a bluntly digital format as a QR code can be appropriated by a luxury brand such as LV is notable in itself.

Since the talk at Glug London, Timo found a lovely piece of work featured by BLDGBLOG by Diego Trujillo-Pisanty who is a student on the Design Interactions course at the RCA that I sometimes teach at.

Diego’s project “With Robots” imagines a domestic scene where objects, furniture and the general environment have been modified for robot senses and affordances.

Another recent RCA project, this time from the Design Products course, looks at fashion in a robot-readable world.

Thorunn Arnadottir’s QR-code beaded dresses and sunglasses imagine a scenario where pop-stars inject payloads of their own marketing messages into the photographs taken by paparazzi via readable codes, turning the parasites into hosts.

But such overt signalling to the distinct and separate senses of humans and robots is perhaps too clean-cut an approach.

Computer vision is a deep, dark specialism with strange opportunities and constraints. The signals that we design towards robots might be both simpler and more sophisticated than QR codes or other 2d barcodes.

Timo has pointed us towards Maya Lotan’s work from Ivrea back in 2005. He neatly frames what may be the near-future of the Robot-Readable World:

Those QR ‘illustrations’ are gaining attention because they are novel. They are cheap, early and ugly computer-readable illustration, one side of an evolutionary pressure towards a robot-readable world. In the other direction, images of paintings, faces, book covers and buildings are becoming ‘known’ through the internet and huge databases. Somewhere they may meet in the middle, and we may have beautiful hybrids such as http://www.mayalotan.com/urbanseeder-thesis/inside/

In our own work with Dentsu – the Suwappu characters are being designed to be attractive and cute to humans and meaningful to computer vision.

Their bodies are being deliberately gauged to register with a computer vision application, so that they can interact with imaginary storylines and environments generated by the smartphone.

Back to Dawkins.

Living in the middle means that our limited human sensoriums and their specialised, superhuman robotic senses will overlap, combine and contrast.

Wavelengths we can’t see can be overlaid on those we can – creating messages for both of us.

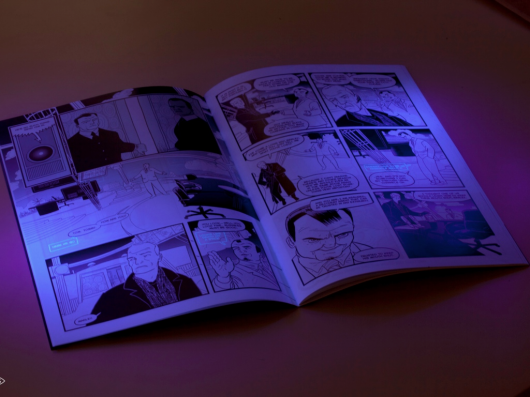

SVK wasn’t created for robots to read, but it shows how UV wavelengths might be used to create an alternate hidden layer to be read by eyes that see the world in a wider range of wavelengths.

Timo and Jack call this “Antiflage” – a made-up word for something we’re just starting to play with.

It is the opposite of camouflage – the markings and shapes that attract and beguile robot eyes that see differently to us – just as Dawkins describes the strategies that flowers and plants have built up over evolutionary time to attract and beguile bees, hummingbirds – and exist in a layer of reality complementary to that which we humans sense and are beguiled by.

And I guess that’s the recurring theme here – that these layers might not be hidden from us just by dint of their encoding, but by the fact that we don’t have the senses to detect them without technological-enhancement.

I say a recurring theme as it’s at the core of the Immaterials work that Jack and Timo did with RFID – looking to bring these phenomena into our “Middle World” as materials to design with.

And while I present this as a phenomenon, and dramatise it a little into being an emergent ‘force of nature’, let’s be clear that it is a phenomenon to design for, and with. It’s something we will invent, within the frame of the cultural and technical pressures that force design to evolve.

That was the message I was trying to get across at Glug: we’re the ones making the robots, shaping their senses, and the objects and environments they relate to.

Hence we make a robot-readable world.

I closed my talk with this quote from my friend Chris Heathcote, which I think goes to the heart of this responsibility.

There’s a whiff in the air that it’s not as far off as we might think.

The Robot-Readable World is pre-Cambrian at the moment, but perhaps in a blink of an eye it will be all around us.

This thought is a shared one – that has emerged from conversations with Matt Webb, Jack, Timo, and Nick in the studio – and Kevin Slavin (watch his recent, brilliant TED talk if you haven’t already), Noam Toran, James Auger, Ben Cerveny, Matt Biddulph, Greg Borenstein, James George, Tom Igoe, Kevin Grennan, Natalie Jeremijenko, Russell Davies, James Bridle (who will be giving a talk this October with the title ‘Robot-readable world’ and will no doubt take it to further and wilder places far more eloquently than I ever could), Tom Armitage and many others over the last few years.

If you’re tracking the Robot-Readable World too, let me know in the comments here – or with the hashtag #robotreadableworld.